ETL AND DATA WAREHOUSE TESTING

ETL Testing

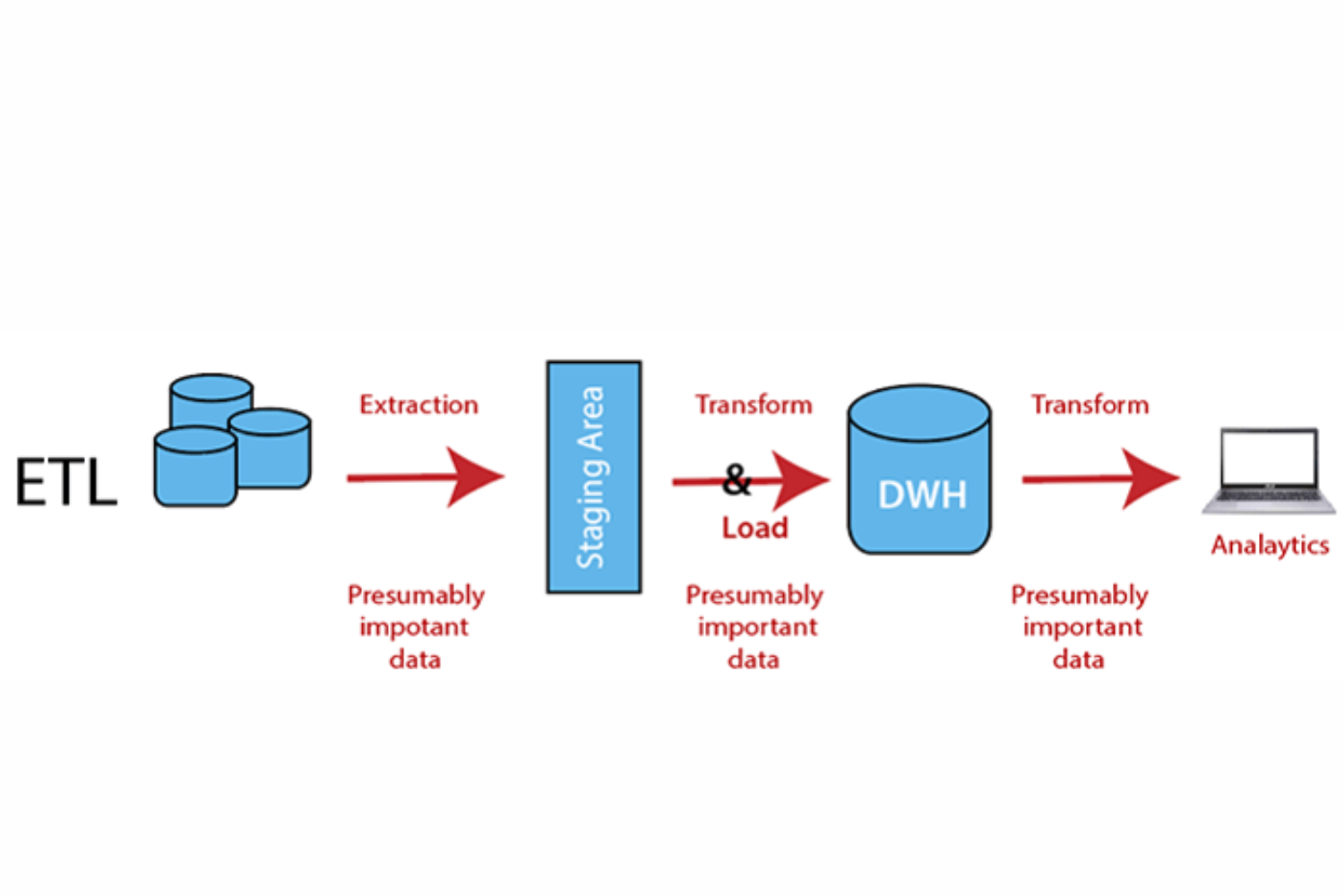

ETL testing verifies that an ETL process extracts, transforms, and loads data in accordance with the requirements. It is carried out by validating the output data that the ETL process produces, typically by comparing it against the input data or against expected results. ETL testing is used in data-centric projects that have a large volume of data or a significant number of data pipelines.

Process of ETL Testing

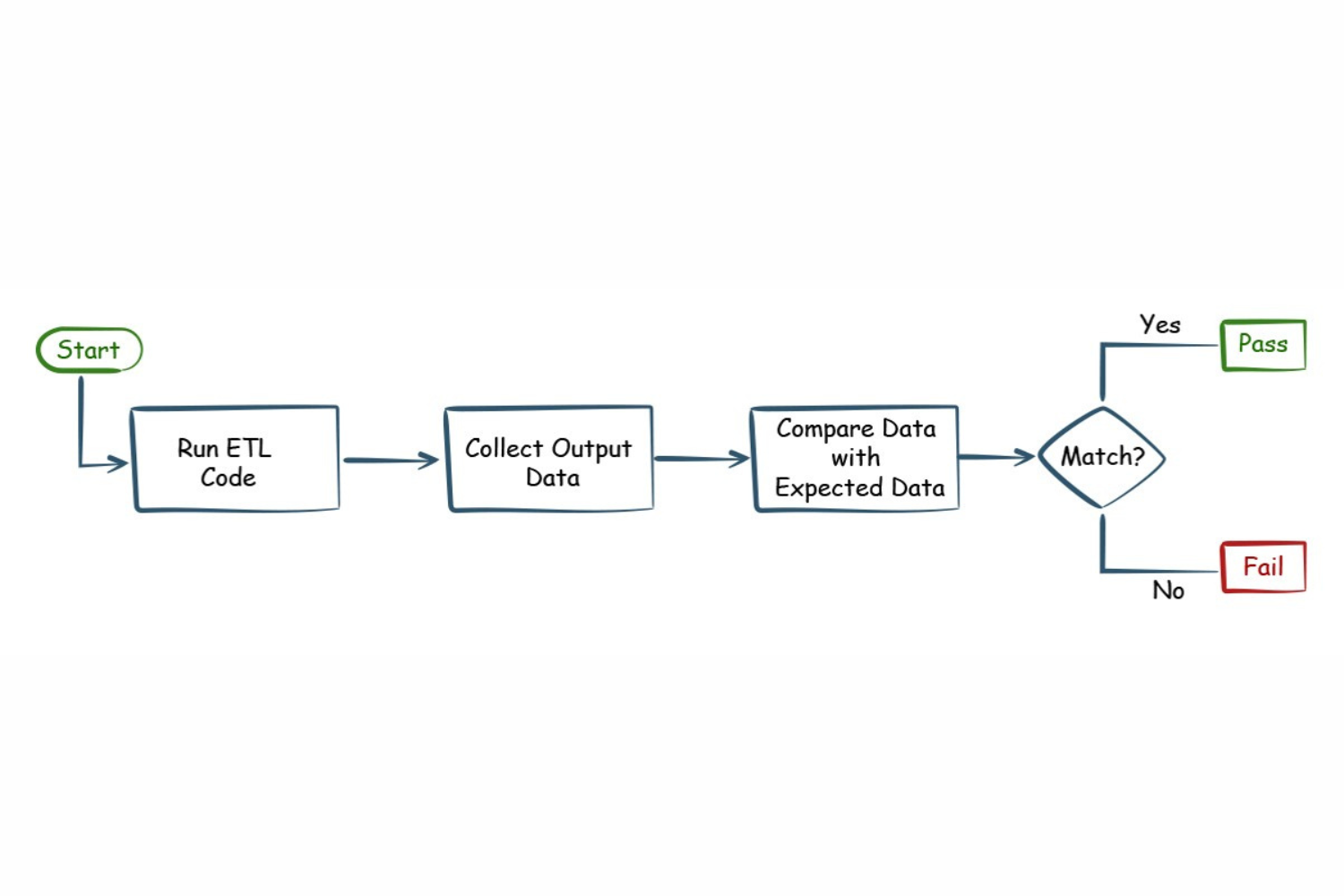

ETL testing usually follows a black box testing approach: the ETL process is first run to produce the output data, and that data is then verified. The quality of the ETL process is therefore assessed indirectly, through the data it produces.

The ETL testing process can be summarized in the following three steps:

1. To create the output data, the ETL code must first be run.

2. Next, a comparison is made between the output data and the predefined expected data.

3. The comparison results are used to assess the ETL process’s quality.
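The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `run_etl`, `compare`, the sample rows, and the transformation rules (trimming names, converting amounts to cents) are all hypothetical stand-ins for a real ETL job and its expected data.

```python
def run_etl(source_rows):
    """Hypothetical ETL step: normalizes names and converts amounts to integer cents."""
    return [
        {
            "id": row["id"],
            "name": row["name"].strip().title(),
            "amount_cents": round(float(row["amount"]) * 100),
        }
        for row in source_rows
    ]


def compare(actual, expected):
    """Return a list of human-readable mismatches, matching rows by id."""
    actual_by_id = {row["id"]: row for row in actual}
    expected_by_id = {row["id"]: row for row in expected}
    mismatches = []
    for key in sorted(actual_by_id.keys() | expected_by_id.keys()):
        if actual_by_id.get(key) != expected_by_id.get(key):
            mismatches.append(
                f"id={key}: expected {expected_by_id.get(key)}, got {actual_by_id.get(key)}"
            )
    return mismatches


# Assumed sample input and the predefined expected output for it.
source = [
    {"id": 1, "name": "  alice ", "amount": "10.50"},
    {"id": 2, "name": "BOB", "amount": "3"},
]
expected = [
    {"id": 1, "name": "Alice", "amount_cents": 1050},
    {"id": 2, "name": "Bob", "amount_cents": 300},
]

output = run_etl(source)                 # Step 1: run the ETL code
mismatches = compare(output, expected)   # Step 2: compare output with expected data
etl_passed = not mismatches              # Step 3: assess quality from the comparison
```

The test never inspects how `run_etl` works internally; it only looks at the data that comes out, which is what makes the approach black box.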

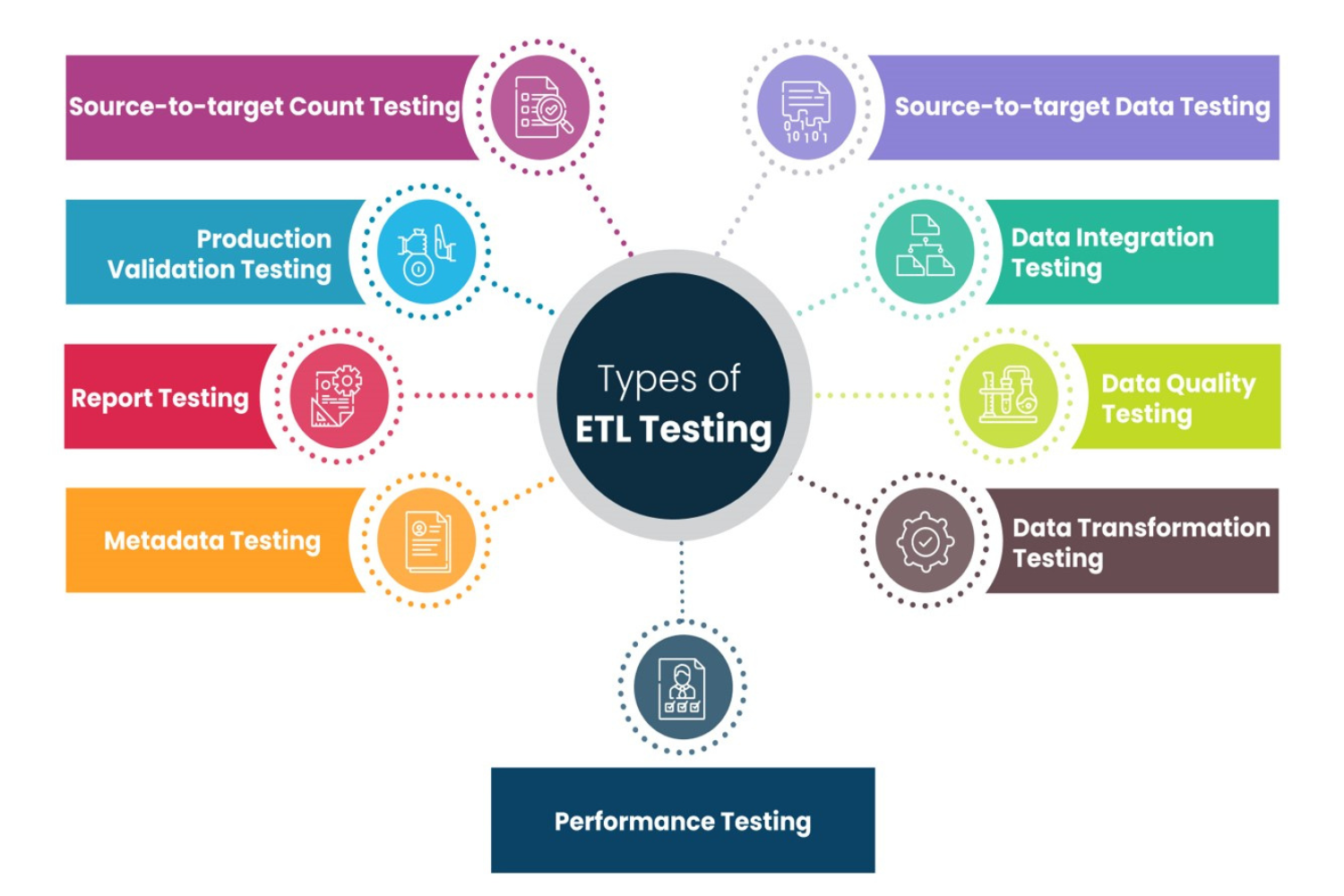

Types of ETL Testing

The types of ETL testing are listed below:

1. ETL Source Data Validation Testing

2. ETL Source to Target Data Reconciliation Testing

3. ETL Data Transformation Testing

4. ETL Data Validation

5. ETL Referential Integrity Testing

6. ETL Integration Testing

7. ETL Performance Testing

8. ETL Functional Testing

9. ETL Unit Testing

10. ETL Validation

Scope of ETL Testing

The scope of ETL (Extract, Transform, Load) testing covers the entire ETL process: extracting data from source systems, transforming it according to business rules, and loading it into a target data repository (e.g., a data warehouse or database). ETL testing identifies data issues so that they can be corrected before the data is used for reporting and analysis. The scope of ETL testing includes the following:

1. Data loading and transformation are accurate.

2. Duplicate data is not produced during the ETL process.

3. The ETL process is executed in the correct sequence.

4. The data processing in the ETL process uses accurate incremental logic.

5. On errors, the ETL outputs the appropriate exit codes.

6. Data exceptions do not cause the ETL processes to crash.

7. Metadata about the ETL process is logged.

8. ETL processes operate effectively in the production environment, and operational issues are monitored and resolved.

9. Data is reconciled after loading, so that discrepancies between source and target data are identified and addressed.
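Two of the checks above, duplicate detection and incremental logic, can be illustrated with a short sketch. The `incremental_load` function, its watermark scheme (ISO date strings in an `updated_at` field), and the sample rows are assumptions made for this example; a real pipeline would define its own incremental rules.

```python
from collections import Counter


def find_duplicate_keys(rows, key="id"):
    """Return the key values that appear more than once in the loaded rows."""
    counts = Counter(row[key] for row in rows)
    return sorted(k for k, n in counts.items() if n > 1)


def incremental_load(target, source, watermark):
    """Hypothetical incremental load: upsert only rows updated after the watermark.

    Dates are ISO-formatted strings, so plain string comparison orders them correctly.
    """
    by_id = {row["id"]: row for row in target}
    for row in source:
        if row["updated_at"] > watermark:
            by_id[row["id"]] = row  # insert new rows, overwrite changed ones
    new_watermark = max([watermark] + [row["updated_at"] for row in source])
    return list(by_id.values()), new_watermark


source = [
    {"id": 1, "updated_at": "2024-01-01", "value": "a"},
    {"id": 2, "updated_at": "2024-01-02", "value": "b"},
]

# Initial load, then a re-run with the same source data.
target, watermark = incremental_load([], source, "")
target, watermark = incremental_load(target, source, watermark)
duplicates_after_rerun = find_duplicate_keys(target)

# A later run in which only row 1 has changed.
changed = [{"id": 1, "updated_at": "2024-01-03", "value": "a2"}]
target, watermark = incremental_load(target, changed, watermark)
values_by_id = {row["id"]: row["value"] for row in target}
```

Re-running a load with unchanged input is a simple way to test both properties at once: the row count should stay the same, and no key should appear twice.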

Conclusion

ETL testing is an iterative, ongoing process that is crucial for maintaining data quality, ensuring data integrity, and supporting data-driven decision-making in organizations. It reduces the risk that inaccurate or incomplete data will affect business operations and reporting.

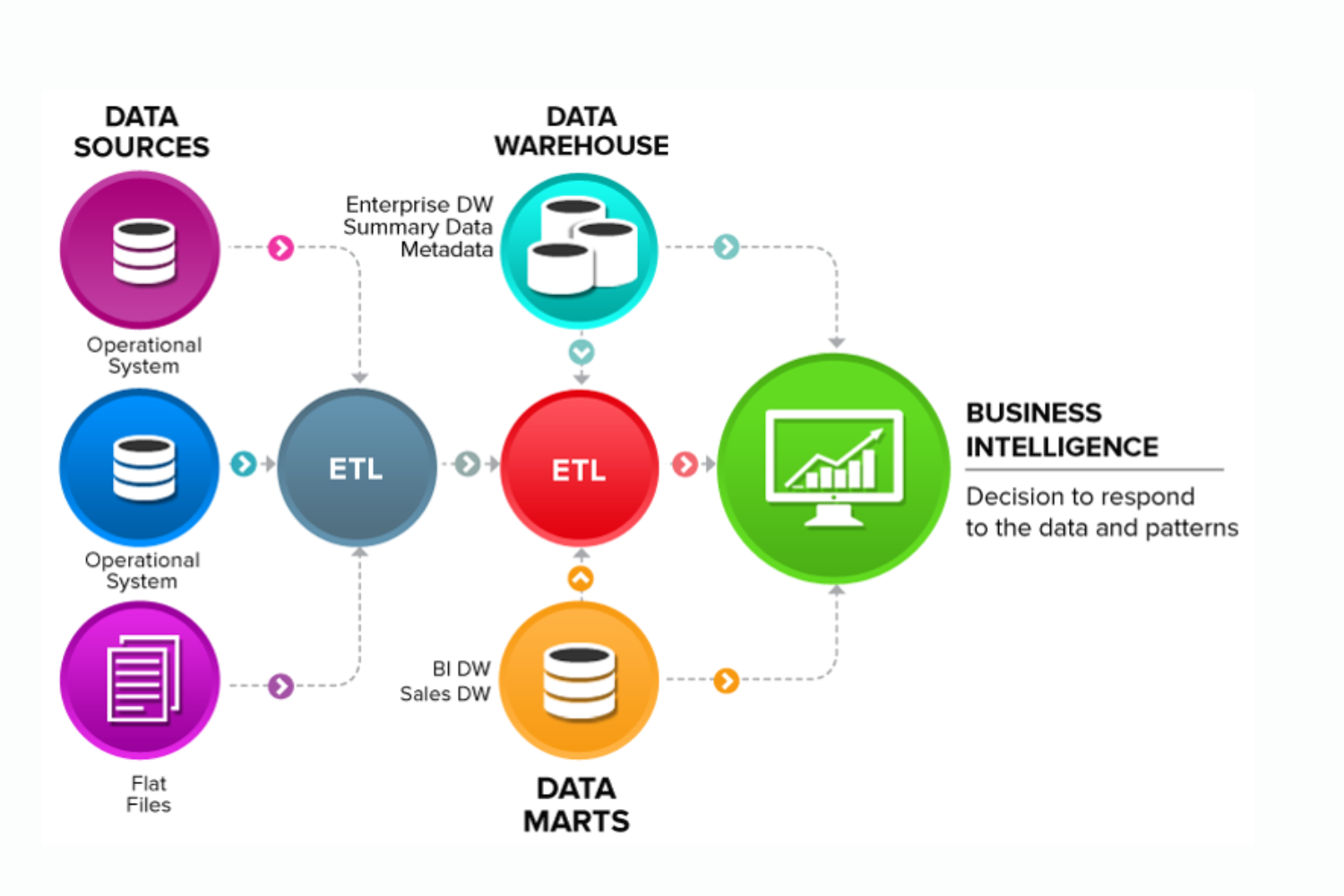

Data Warehouse Testing

Data Warehouse Testing is the process of systematically evaluating and validating the data within a data warehouse to ensure its accuracy, reliability, and quality. Data warehouses are designed to store and manage large volumes of data from various sources, making testing a critical step to ensure that the data is fit for reporting and analytics. Data Warehouse Testing is essential for data-driven businesses that depend on precise, timely, and trustworthy information to make decisions.

Key Aspects And Best Practices For Data Warehouse Testing

Data Extraction Testing: Verify that data is accurately and completely extracted from source systems.

Data Transformation Testing: Validate that data transformation rules and business logic are correctly applied.

Data Loading Testing: Confirm that data is loaded into the data warehouse without loss or corruption.

Data Quality Testing: Assess data accuracy, completeness, consistency, and reliability. Verify that validation rules, constraints, and integrity checks work as intended.

Performance Testing: Evaluate the performance of data loading and retrieval processes. Conduct stress testing to assess scalability and response times for large data volumes.

Data Reconciliation: Compare data in the data warehouse to the source data to identify and address discrepancies.

Data Security and Compliance Testing: Assess data security measures within the data warehouse, including encryption, access controls, and user authentication.

Metadata Validation: Validate the accuracy of metadata, including data dictionaries, schema definitions, and data lineage documentation.

ETL Workflow Testing: Validate ETL workflow and job dependencies to ensure that processes execute in the correct sequence.

Data Integration Testing: Test end-to-end data integration scenarios to ensure data flows correctly across the data warehouse and other systems.

Operational Testing: Ensure that data warehouse processes operate effectively in the production environment, monitoring and resolving operational issues.

Data Quality Monitoring: Implement ongoing data quality monitoring to identify and address data quality issues as they arise.

Regression Testing: Conduct regression testing to ensure that data warehouse updates or changes do not introduce defects or data quality issues.

Query and Reporting Testing: Test SQL queries and reporting tools to ensure that they provide accurate results and meet business requirements.

User Acceptance Testing (UAT): Involve end-users and business stakeholders in UAT to ensure that the data warehouse meets their needs and expectations.

Data Masking and Anonymization: Implement data masking and anonymization techniques to protect sensitive or personally identifiable information in non-production environments.
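As one illustration of the practices above, the data reconciliation check can be sketched with Python's built-in `sqlite3` module. The table names (`src_orders`, `dw_orders`), the columns, and the sample rows are hypothetical; an in-memory database stands in for both the source system and the data warehouse.

```python
import sqlite3

# In-memory database standing in for the source system and the warehouse.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE src_orders (order_id INTEGER PRIMARY KEY, amount_cents INTEGER);
    CREATE TABLE dw_orders  (order_id INTEGER PRIMARY KEY, amount_cents INTEGER);
    INSERT INTO src_orders VALUES (1, 1000), (2, 2500), (3, 400);
    INSERT INTO dw_orders  VALUES (1, 1000), (2, 2500);
    """
)


def reconcile(conn, source_table, target_table, key, measure):
    """Compare row counts, a column total, and missing keys between two tables.

    Table and column names are interpolated directly into the SQL, which is
    acceptable for fixed names in a test but not for untrusted input.
    """

    def summary(table):
        return conn.execute(
            f"SELECT COUNT(*), COALESCE(SUM({measure}), 0) FROM {table}"
        ).fetchone()

    missing = [
        row[0]
        for row in conn.execute(
            f"SELECT s.{key} FROM {source_table} s "
            f"LEFT JOIN {target_table} t ON s.{key} = t.{key} "
            f"WHERE t.{key} IS NULL ORDER BY s.{key}"
        )
    ]
    return {
        "source": summary(source_table),
        "target": summary(target_table),
        "missing_in_target": missing,
    }


report = reconcile(conn, "src_orders", "dw_orders", "order_id", "amount_cents")
is_reconciled = report["source"] == report["target"] and not report["missing_in_target"]
```

Comparing counts and totals is cheap and catches most load failures; the list of missing keys then points to the specific rows that need investigation.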

Conclusion

Data warehouse testing is an iterative and ongoing process, ensuring that the data remains accurate and consistent over time. By conducting thorough testing and monitoring, organizations can maintain data quality, support informed decision-making, and drive business insights.